The Missing Layer in AI: Meaning, Discipline, and Human Use

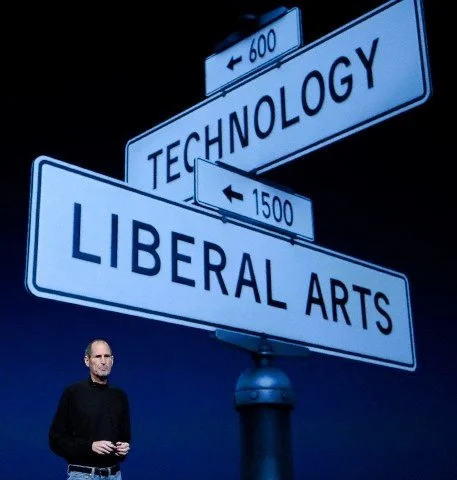

“A bicycle for the mind” is one of Steve Jobs’ most striking metaphors, coined at a time when most people did not yet understand what a personal computer was. When asked, “How will an average person learn how to type on a keyboard?”, he famously replied, “Death will eventually take care of it.” After being ousted from Apple, Jobs created the NeXT computer, which included advanced networking capabilities and ultimately became the machine Tim Berners-Lee used at CERN to build the World Wide Web. Jobs did more than build powerful platforms; he understood that the true application of technology rests with artists and developers, two communities that often fail to understand or value each other’s contributions. Pixar became the rare exception where art and engineering aligned to produce some of the greatest animated films ever made.

What Jobs consistently did right was to build tools that ordinary people could use productively, while simultaneously cultivating disciplined ecosystems where developers could extend those tools. He built capability and comprehension together. He created power and usability.

With AI, we are missing that pairing. Everyone is chasing outcomes, particularly in fields like medicine, while becoming hypnotised by impressive outputs. Strength is mistaken for maturity. Capability is mistaken for readiness. Many do not yet know how to harness AI as an infrastructure of thought, rather than as a spectacle of computation.

Our understanding remains constrained by what we know and what we assume, and AI is no different. The technology underpinning it is arguably the most advanced system humanity has developed. Its applications are enormous, and many of the most meaningful uses likely sit in the “unknown unknowns”. Yet institutions are meeting this epochal shift with a familiar pattern: anxiety, misunderstanding, and performance.

Universities and governments are struggling not because they are malicious, but because historically they have been slow to integrate new technologies into serious decision-making. Transitional periods have always existed around emerging technologies, but today the response is amplified by incentives that reward compliance, optics, and risk-avoidance over measurement and learning. The result is slop, meaning unverified outputs, inflated claims, and policy written to signal action rather than improve outcomes. That combination demands a rethink of how we build, govern, and integrate AI.

AI needs a public interface to its own power. At present, we have capability without culture, and systems without a shared grammar. People approach AI as a chatbot, an oracle, or a searchable brain, and none of these metaphors is sufficient. Without a clear mental model, societies oscillate between blind reverence and lazy dismissal. We also lack what Jobs intuitively created: a collective “developer conference” moment for civilisation. Not an event, but a disciplined pattern: a space where capability is demonstrated alongside constraint, where limits are explained openly, and where responsible use is taught with as much seriousness as innovation. The missing layer is not invention. It is meaning, standards, pedagogy, and craft.

Jobs captured the missing ingredient in one line: “Technology alone is not enough; it’s technology married with the liberal arts, married with the humanities, that yields us the results that make our hearts sing.” That is exactly what the AI moment lacks. We have astonishing capability, but too little of the human discipline that turns capability into judgement, usability, and trust. Without that marriage, we will keep mistaking fluent output for understanding, and speed for wisdom.

This “slop” problem is not a moral failure. It is an incentive failure. If institutions reward speed, volume, and plausible language, AI will produce speed, volume, and plausible language. If we instead reward measurement, reproducibility, accountability, and verifiable contribution, AI will reshape itself accordingly. AI mirrors what we value. It multiplies whatever systems select for.

The real frontier is not whether AI will replace specific professions. The real frontier is that AI will change the cost of thinking. When thinking becomes cheaper, the bottleneck shifts. Judgement becomes scarce. Taste becomes scarce. Verification becomes scarce. The institutions that succeed will be those that embed verification natively rather than treating it as a corrective layer, and that reserve human expertise for stewarding decisions rather than supervising mechanical processes.

This is also where the SCL AI Agent fits, at least philosophically. It was built by Professor Bruce Mountain and Shruti Kant at the VEPC in Melbourne and is grounded in the lifetime work of Professor Stephen Littlechild. If the goal of AI is not merely to produce text but to discipline reasoning, then fluency is not the benchmark. The benchmark is whether it helps us see further, ask better questions, and make fewer confident mistakes. If we fail to design AI around those principles, we will continue generating impressive outputs while achieving shallow progress.

If Steve Jobs were here, I doubt he would be dazzled by AI’s output. He would obsess over its interface. He would ask: Where is the elegance? Where is the discipline? Where is the simplification that allows ordinary people to do extraordinary things? He would not romanticise AI as “intelligence”. He would frame it as a new material. And he would insist that we build the moral, cultural, and technical layers required to make it truly human-usable.

Jobs believed that technology is at its best when it disappears into human intention. He would look at AI today and see a product half-finished: too loud, too spectacular, too undisciplined. He would challenge us to choose between another fleeting parade of demos and the hard work of building civilisational infrastructure. He would demand coherence rather than chaos, craftsmanship rather than performance.

And perhaps his deepest lesson is this: Jobs never built the future because it was fashionable. He built it because he believed ordinary people deserved extraordinary tools. AI will meet its promise only if we hold ourselves to that same standard. Not AI for its own sake. Not AI because everyone else is doing it. But AI built patiently, deliberately, with courage, taste, humility, and the stubborn belief that technology should expand human possibility rather than overwhelm it.